Your AI Agent Just Got Core Web Vitals Superpowers

Connect Claude Code to your CoreDash field data. It finds your worst bottleneck across millions of page loads, traces the root cause in Chrome, and writes the fix. Agentic web performance: not a report, but the actual line of code that needs to change.

AI Performance Tools Have a Data Problem

Most AI agents optimize for Lighthouse. A synthetic score on a simulated device that Google does not use for rankings. A useful web performance AI agent starts from the same data Google does: real users on budget phones, spotty connections, and continents your dev machine has never seen.

Lighthouse Is Not Your Ranking Signal

Google ranks on CrUX field data from real Chrome users over 28 days. A perfect Lighthouse score and a failing field score happen all the time. 52% of mobile sites fail at least one Core Web Vital in the field.

Blind Agents Make Blind Fixes

Without real user data, an AI agent does not know which page is slow, which element is the bottleneck, or whether its fix helped. It optimizes a simulation and calls it a day. Your actual users disagree.

Manual Investigation Takes Hours

Segment the data. Hypothesize. Run a trace. Confirm. Draft the fix. A senior performance engineer spends 2 to 4 hours per issue. Multiply that by every slow page on your site.

INP cannot be simulated in a lab at all

Interaction to Next Paint measures how quickly your page responds when real users interact with it. No synthetic tool can replicate real user behavior: where they tap, how fast they scroll, what device they hold. A standard Lighthouse page-load run does not even report INP. If your AI agent runs Lighthouse, it is blind to your worst interactivity problems. Field data is the only source.

Two sources of truth: Field data meets browser evidence

CWV Superpowers combines CoreDash real user data with targeted Chrome traces. The field data tells it what is slow. Chrome tells it why.

CoreDash tells the agent what is slow

CoreDash tracks every page load from every real user. Every metric, attributed to the exact element causing the issue. No sampling, no caps.

When CoreDash reports a 4.2 second LCP with Load Delay consuming 52% of total time on div.hero > img.main, the agent knows exactly where to look. Not a guess. A measurement from millions of real sessions.

The skill queries 25+ CoreDash dimensions: LCP element, element type, priority state, phase breakdown, INP interaction target, LOAF scripts, CLS shifting element, device type, visitor type, network speed, 7-day trends.

Chrome tells the agent why it is slow

CWV Superpowers visits the page with mobile emulation: Fast 3G, 4x CPU throttling. It traces only the bottleneck phase that CoreDash identified.

Load Delay is the bottleneck? The agent examines the network waterfall for discovery gaps. Render Delay? It looks for blocking scripts and font loading delays.

The result: filmstrip screenshots, network waterfall, and targeted evidence that explains the root cause your field data exposed.

Proportional reasoning, not absolute thresholds

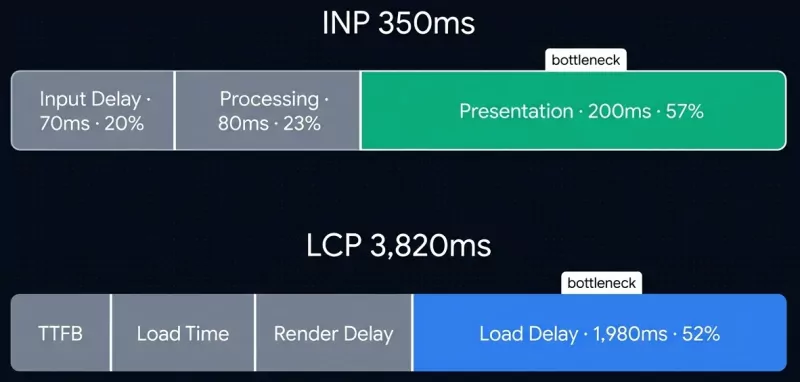

Lighthouse tells you "Render Delay is 350ms." Is that the problem? No idea. CWV Superpowers identifies the bottleneck as the phase consuming the largest percentage of total time.

INP is 350ms. Input Delay 70ms (20%), Processing 80ms (23%), Presentation 200ms (57%). Presentation is the bottleneck, even though 200ms sounds fine in isolation. Fixing it moves the needle. Optimizing Input Delay barely registers.

This prevents the most common mistake in performance work: fixing the wrong thing.
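The arithmetic behind that INP example fits in a few lines. This is a minimal sketch of the idea, not the skill's actual code: the function name `find_bottleneck` and the dictionary shape are assumptions made for illustration.

```python
def find_bottleneck(phases_ms: dict[str, float]) -> tuple[str, float]:
    """Return the phase with the largest share of total time, and that share in percent."""
    total = sum(phases_ms.values())
    if total <= 0:
        raise ValueError("phase durations must sum to a positive number")
    name = max(phases_ms, key=phases_ms.get)
    return name, 100 * phases_ms[name] / total


# The INP example from the text: 70 + 80 + 200 = 350 ms
inp_phases = {"Input Delay": 70, "Processing": 80, "Presentation": 200}
name, share = find_bottleneck(inp_phases)
print(f"{name}: {share:.0f}% of INP")  # Presentation: 57% of INP
```

The same function works for the four LCP phases. Only the keys change.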

Five steps: From "something is slow" to code fix

Ask it a question. Five steps later you have a fix backed by real user evidence.

1. Discovery

Scans your CoreDash data for the worst pages and metrics. Prioritizes poor ratings, mobile, high-traffic pages, and p75 scores that hide a long poor tail.

2. Diagnosis

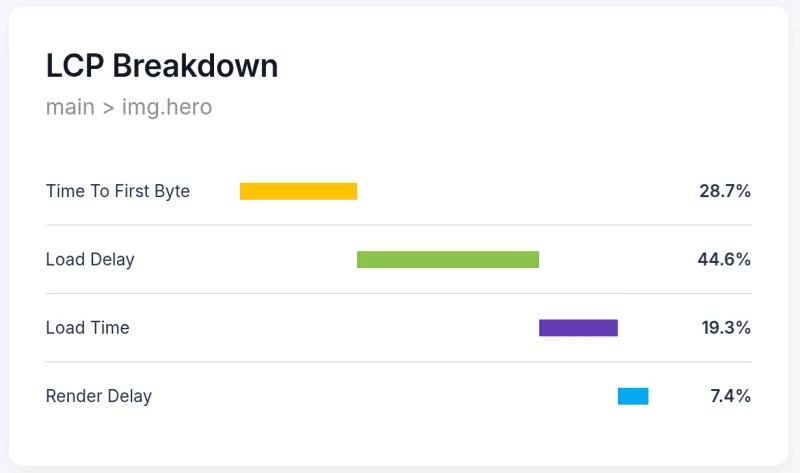

Breaks the metric into phases. LCP: TTFB, Load Delay, Load Time, Render Delay. INP: Input Delay, Processing, Presentation. Names the bottleneck by percentage.

3. Chrome Trace

Visits the page with mobile emulation. Traces only the bottleneck phase from step 2. Captures network waterfall, filmstrip, and blocking resource evidence.

4. Root Cause

Combines both evidence sources into one statement: the element, the cause, the CoreDash metrics, and what Chrome confirmed. No ambiguity.

5. Fix or Report

Your choice. Apply the code fix with file, line, element, before/after. Generate a self-contained HTML report with charts and evidence. Or both.
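Step 1 mentions p75 scores that hide a long poor tail. A small illustration of what that means, using made-up samples and the public LCP thresholds (2,500ms good, 4,000ms poor). The helper names are hypothetical and the nearest-rank percentile is one common definition, not necessarily the one CoreDash uses.

```python
import math

LCP_GOOD_MS = 2500  # at or below this, LCP is rated "good"
LCP_POOR_MS = 4000  # above this, LCP is rated "poor"


def p75(samples):
    """Nearest-rank 75th percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def poor_share(samples, poor_threshold=LCP_POOR_MS):
    """Percentage of samples rated poor."""
    return 100 * sum(s > poor_threshold for s in samples) / len(samples)


# Made-up page: 75 fast loads, 25 very slow ones
lcp_samples = [1800] * 75 + [6000] * 25
print(f"p75 = {p75(lcp_samples)} ms, poor loads = {poor_share(lcp_samples):.0f}%")
# p75 = 1800 ms, poor loads = 25%
```

The p75 passes comfortably, yet one in four visitors waits six seconds. That is the kind of page discovery should still surface.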

25+ dimensions: Every angle your field data covers

These are the actual CoreDash dimensions the agent queries. Not a summary. The full picture.

LCP (Largest Contentful Paint)

LCP element, Element type, Priority state, TTFB phase, Load Delay, Load Time, Render Delay

INP (Interaction to Next Paint)

INP target, Input Delay, Processing, Presentation, LOAF scripts, Load state

CLS (Cumulative Layout Shift)

Shifting element, Shift cause, Shift timing

Segments

Device type, Country, Browser, OS, Connection, Visitor type, Page path

Trends

7-day delta, 28-day baseline, Regression detection

Diagnose: Phase-level breakdown for every Core Web Vital

Not just scores. Every metric broken into phases using real user attribution from CoreDash.

Fix LCP with AI: Largest Contentful Paint diagnosis

4-phase breakdown: TTFB, Load Delay, Load Time, Render Delay. Identifies which phase consumes the largest share of total time.

Element attribution: the exact LCP element, its type (image, text, background image, video), and priority state (fetchpriority, lazy loading).

Typical fixes: add preload hint, remove lazy loading from hero, optimize image format, fix render-blocking script.
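Two of those fixes (lazy loading on the hero, missing fetchpriority) can be checked mechanically once the LCP element is known. A rough sketch using Python's standard `html.parser`. The class and function names are hypothetical, and matching the element by a single CSS class is a simplification of real element attribution.

```python
from html.parser import HTMLParser


class LcpImageAudit(HTMLParser):
    """Collects findings for <img> tags carrying the given class."""

    def __init__(self, lcp_class):
        super().__init__()
        self.lcp_class = lcp_class
        self.findings = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        if self.lcp_class not in (attr.get("class") or "").split():
            return
        if attr.get("loading") == "lazy":
            self.findings.append('remove loading="lazy" from the LCP image')
        if attr.get("fetchpriority") != "high":
            self.findings.append('add fetchpriority="high" to the LCP image')


def audit_lcp_image(html, lcp_class):
    parser = LcpImageAudit(lcp_class)
    parser.feed(html)
    parser.close()
    return parser.findings


template = '<div class="hero"><img class="main" src="/hero.jpg" loading="lazy"></div>'
for finding in audit_lcp_image(template, "main"):
    print(finding)
```

A check like this only confirms the markup. Whether the change actually moves LCP is still a question for the field data.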

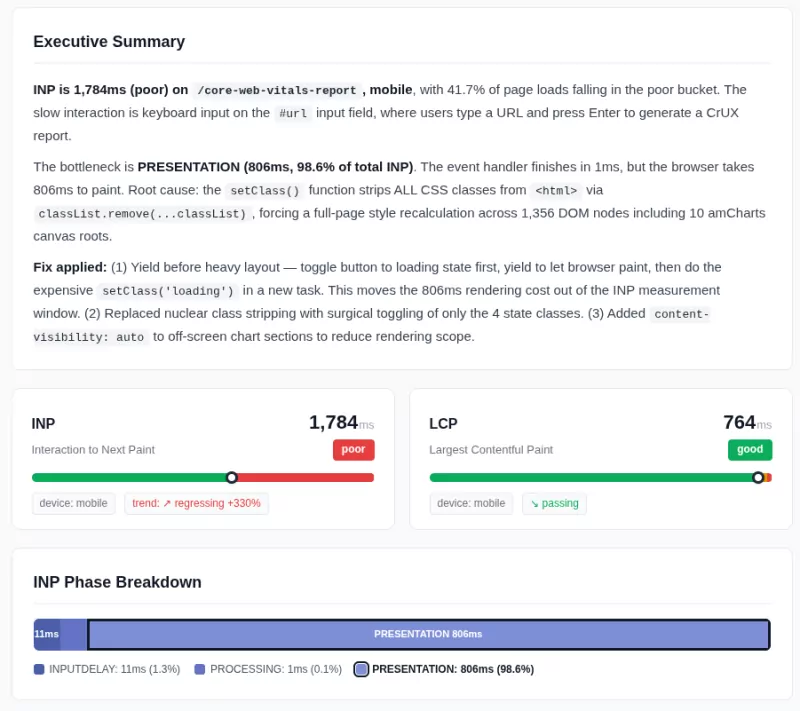

Fix INP with AI: Interaction to Next Paint diagnosis

3-phase breakdown: Input Delay, Processing, Presentation. The only metric you cannot simulate in a lab. Field data is the only source.

Script attribution: Long Animation Frames (LOAF) names the exact JavaScript file and duration. Plus the page load state when the interaction happened.

Typical fixes: yield to main thread, defer evaluation, split event handlers, content-visibility for large DOMs.

CLS: Cumulative Layout Shift

5 cause patterns: images without dimensions, font swaps, dynamically injected content, late-loading resources, CSS animations on layout properties.

Cross-dimensional: compares mobile vs desktop, new vs repeat visitors, fast vs slow networks to narrow the cause.

Typical fixes: add width/height, font-display: optional, min-height reservation, use transform instead of top/left.
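The cross-dimensional comparison can be pictured as looking for the dimension whose segments disagree the most. The sketch below is one possible heuristic, assumed for illustration: the numbers are invented, and `widest_gap` is a hypothetical helper, not a CoreDash or CWV Superpowers function.

```python
def widest_gap(cls_by_dimension):
    """Return (dimension, worst_segment, gap) for the dimension whose segments differ most."""
    best = None
    for dimension, segments in cls_by_dimension.items():
        worst = max(segments, key=segments.get)
        gap = segments[worst] - min(segments.values())
        if best is None or gap > best[2]:
            best = (dimension, worst, gap)
    return best


# Invented p75 CLS values per segment
cls_p75 = {
    "device": {"mobile": 0.31, "desktop": 0.05},
    "visitor": {"new": 0.22, "repeat": 0.19},
    "network": {"slow": 0.24, "fast": 0.20},
}
dimension, segment, gap = widest_gap(cls_p75)
print(f"Largest gap: {dimension} ({segment} is worse by {gap:.2f})")
# Largest gap: device (mobile is worse by 0.26)
```

Here the shift is mostly a mobile problem, which points toward layout that only breaks at narrow viewports rather than fonts or caching.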

What a Root Cause Statement Looks Like

Not "consider optimizing your images." This is the actual output. Specific enough to review and merge.

Root cause:

The LCP image div.hero-banner > img.product-main on /product/running-shoes-42 is discovered 1,980ms late because it lacks a preload hint and has no fetchpriority="high".

CoreDash evidence:

LCP is 3,820ms (needs improvement) on mobile, p75. Load Delay is the bottleneck at 1,980ms (52% of total). Priority state: 3 (not preloaded). Trend: worsening +340ms over 7 days.

Chrome evidence:

Network waterfall shows 1,940ms gap between HTML first byte and image request. Image referenced only in CSS, invisible to preload scanner.

Fix:

Add <link rel="preload" href="/images/hero.jpg" as="image" fetchpriority="high"> to templates/product.html line 12. Set fetchpriority="high" on the img element at line 47.

Generic AI advice:

"Consider adding fetchpriority to your LCP image and ensure proper preloading of critical resources."

CWV Superpowers:

Element: div.hero-banner > img.product-main

File: templates/product.html, line 47

Evidence: 52% of LCP time in Load Delay (CoreDash p75). 1,940ms discovery gap (Chrome waterfall).

Fix: 2-line code change with before/after diff.

Compare: How CWV Superpowers stacks up

Different tools solve different problems. Here is what each one actually does.

| Capability | CoreDash + CWV Superpowers | Chrome DevTools MCP | PSI / Lighthouse MCP |
|---|---|---|---|
| Data source | Real users (28 days field data) | Single lab session | Simulated single load |
| INP measurement | ✓ Real interactions | ✗ No real users | ✗ Not measured |
| Phase breakdown | ✓ LCP, INP, CLS phases | ~ Manual analysis | ✗ Score only |
| Element attribution | ✓ Exact element + priority | ~ If you know where to look | ~ Generic suggestions |
| Proportional reasoning | ✓ Bottleneck by % | ✗ Absolute values | ✗ Absolute values |
| Segment comparison | ✓ Device, country, browser | ✗ Single config | ✗ Single config |
| Trend detection | ✓ 7-day delta | ✗ Point-in-time | ✗ Point-in-time |
| Chrome tracing | ✓ Targeted by phase | ✓ Full access | ✗ No browser |
| Code fixes | ✓ File + line + diff | ~ Agent-dependent | ~ Generic advice |

Note: Chrome DevTools MCP is complementary. CWV Superpowers uses it for targeted tracing after field data identifies the bottleneck. They work best together.

Reports: Drop them in Slack, attach to Jira

Self-contained HTML. No dependencies. No build step. One file with everything inline.

Full Report (with Chrome)

Color-coded metrics cards, phase breakdown charts, filmstrip screenshots at key moments (first paint, LCP, loaded), network waterfall SVG, root cause analysis, and the recommended fix with before/after code.

RUM-Only Report

Same metrics cards and phase breakdown, plus element attribution and root cause analysis. No filmstrip or waterfall, but diagnosis quality is identical because field data is the source of truth.

Works with any MCP client

Claude Code: Full skill with automated workflow. Discovery, diagnosis, Chrome tracing, code fixes, and reports. Recommended.

Cursor: Plugin installation with CoreDash MCP. Full diagnosis and code fixes inside your editor.

VS Code, Windsurf, Gemini CLI: Any client supporting HTTP MCP servers connects to CoreDash. Same field data, same attribution.

Client Success

Don't just take my word for it

VP Product, Expedia Group

"We had 80+ third-party scripts and were failing CWV on every major property. Arjen got us passing without touching our ad revenue."

CTO, DPG Media

"He found bottlenecks in our component library that we'd missed for two years. Performance gains were visible within days."

Head of Digital, Rituals

"We used to break performance every other sprint. He set up budgets in our pipeline. Haven't had a regression since."

VP Engineering, Loop

"Mobile load time down 800ms. 7% lift in checkout conversion. The ROI justified the investment immediately."

Engineering Manager, Zalando

"Every other audit we've had gave us a list of problems. This one told us exactly what to fix first and why."

Head of Platform, Adevinta

45% reduction in blocking time across 15 marketplaces. INP from 440ms to 64ms on Fotocasa alone. Google wrote up the results on web.dev.

VP Engineering, People Inc

"Seven brands, seven different stacks. Every single one got faster. No compromises on what makes each property unique."

Head of Engineering, Swift

"Layout shift on checkout eliminated entirely. Went from 0.4 to 0.02 CLS across mobile and desktop."

Product Lead, Miro

"Our dashboards were choking on main-thread work. 25% reduction in CPU usage. Users noticed immediately."

Lead Developer, Alza

"Transferred knowledge to our engineering team. We can now diagnose and resolve performance issues independently."

Running in 2 Minutes

Automated Core Web Vitals diagnosis in your terminal. You need a CoreDash account with data flowing. The free tier works.

Claude Code

    claude mcp add --transport http coredash \
      https://app.coredash.app/api/mcp \
      --header "Authorization: Bearer cdk_YOUR_API_KEY"

    /plugin marketplace add corewebvitals/cwv-superpowers

    /plugin install cwv-superpowers@cwv-superpower

    claude --chrome

Then ask: "Find my biggest CWV issue and fix it."

Get your API key from CoreDash → Project Settings → API Keys (MCP). Shown once. Stored as SHA-256 hash. Read-only.

Cursor

/plugin-add cwv-superpowers

Add CoreDash to .cursor/mcp.json:

    {
      "mcpServers": {
        "coredash": {
          "url": "https://app.coredash.app/api/mcp",
          "headers": {
            "Authorization": "Bearer cdk_YOUR_API_KEY"
          }
        }
      }
    }

Other MCP Clients

Endpoint: https://app.coredash.app/api/mcp

Header: Authorization: Bearer cdk_YOUR_API_KEY

Works with VS Code (Copilot agent mode), Windsurf, Gemini CLI, Claude Desktop, and any HTTP MCP client. One MCP web performance endpoint, every agent.
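If your client is not listed, it can help to see what an HTTP MCP handshake looks like on the wire. The sketch below builds (but does not send) a standard MCP `initialize` request with Python's standard library. The endpoint and header come from above. The protocol version string, the client name, and the assumption that the CoreDash server accepts this exact payload are illustrative and unverified.

```python
import json
import urllib.request

ENDPOINT = "https://app.coredash.app/api/mcp"


def build_initialize_request(api_key: str) -> urllib.request.Request:
    """Build a JSON-RPC 'initialize' request as used by HTTP MCP transports."""
    payload = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "initialize",
        "params": {
            "protocolVersion": "2025-03-26",  # assumption: a version the server supports
            "capabilities": {},
            "clientInfo": {"name": "connectivity-check", "version": "0.1.0"},  # hypothetical
        },
    }
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
            "Accept": "application/json, text/event-stream",
        },
    )


request = build_initialize_request("cdk_YOUR_API_KEY")
print(request.get_method(), request.full_url)
# To actually send it: urllib.request.urlopen(request, timeout=10)
```

In practice your MCP client does this for you. The sketch is only useful for checking that a key and the endpoint are reachable from a given network.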

Frequently Asked Questions

Do I need Chrome running to use CWV Superpowers?

No. Chrome tracing is optional. Without it you get full field data diagnosis, phase breakdowns, element attribution, and code fix suggestions based on CoreDash data alone. Chrome adds filmstrip screenshots, network waterfall, and visual confirmation of the root cause. Both modes generate reports.

How is this different from running Lighthouse in my AI agent?

Lighthouse runs a single synthetic load on your machine. CWV Superpowers uses 28 days of real user data from CoreDash: actual devices, actual networks, actual interactions. It measures INP from real user taps (Lighthouse cannot). It compares segments (mobile vs desktop, India vs US). And it uses proportional reasoning to find the bottleneck phase, not just absolute scores.

Which AI coding agents are supported?

Any AI coding agent that supports MCP (Model Context Protocol) servers. Claude Code has a dedicated skill with an automated 5-step workflow. Cursor, VS Code (Copilot agent mode), Windsurf, Gemini CLI, and Claude Desktop connect via the CoreDash HTTP MCP endpoint. The field data and attribution are identical across all clients.

Is CoreDash free?

CoreDash has a free tier that works with CWV Superpowers. You need data flowing from your site (add the CoreDash script tag). The free tier has no sampling and no page view caps. API keys for MCP access are available on all plans.

Can I use this for client sites?

Yes. For each CoreDash project you can create unlimited dedicated MCP API keys. Add CoreDash to each client site, generate a read-only API key, and configure your MCP client. The agent sees only the data for that project. CWV Superpowers is MIT licensed, so there are no restrictions on commercial use.

Open Source. No Lock-in.

Core Web Vitals automation you can inspect and extend. The orchestrator, the diagnosis modules, the Chrome tracing logic, and the report templates are all on GitHub. Read how it works. Fork it. Extend it. Contribute.
