CoreDash Dimensions: Custom Labels & Segmentation

Measure performance where it counts: by A/B variant, business page type, and login state, not just by URL.

Custom Segmentation in CoreDash

Technical dimensions like country and device type are built from browser signals. CoreDash collects them automatically. The three dimensions covered here are different: Page Label, A/B Test, and Logged In Status are user-defined. You set them by assigning a window variable in your own code before CoreDash runs.

That shift from automatic to intentional is the whole point. Your application knows things the browser cannot infer: which checkout variant a user is seeing, whether the current URL is a product detail page or a landing page, whether the user is authenticated. Passing that context to CoreDash means your performance data reflects how your business actually works.

Page Label (lb)

The Page Label dimension lets you group pages by business function rather than URL structure. Define it like this:

window.__CWVL = 'mypagelabel';

Typical values: checkout, product-detail, landing-page, category, search-results, account. The value is an arbitrary string you control.

Why this matters

URL-based analysis has a fundamental scaling problem. A large e-commerce site may have 50,000 product detail pages. Their URLs look like /products/blue-widget-32oz and /products/red-gadget-xl. These are the same template, the same business function, the same optimization target. Analyzing them one URL at a time is not useful. Grouping them under product-detail gives you a single performance profile for the entire product catalog.
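One way to produce that grouping is a small table that maps URL patterns to labels. The sketch below is illustrative only: the LABEL_RULES table, the pageLabelFor helper, the route patterns, and the 'other' fallback are assumptions for this example, not part of CoreDash.

```javascript
// Hypothetical route table: the first matching pattern wins.
// Patterns and label names are illustrative, not CoreDash defaults.
const LABEL_RULES = [
  { pattern: /^\/checkout(\/|$)/, label: 'checkout' },
  { pattern: /^\/products\/[^/]+\/?$/, label: 'product-detail' },
  { pattern: /^\/category\//, label: 'category' },
  { pattern: /^\/search(\/|$)/, label: 'search-results' },
  { pattern: /^\/account(\/|$)/, label: 'account' },
];

// Returns the business label for a URL path, or a fallback for unmatched pages.
function pageLabelFor(pathname, fallback = 'other') {
  const rule = LABEL_RULES.find((r) => r.pattern.test(pathname));
  return rule ? rule.label : fallback;
}

// In the browser, run this before the CoreDash snippet executes.
if (typeof window !== 'undefined') {
  window.__CWVL = pageLabelFor(window.location.pathname);
}
```

With this table, /products/blue-widget-32oz and /products/red-gadget-xl both resolve to product-detail. If your server or router already knows the template name, emitting the label from there is usually simpler than matching URLs in the browser.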

Page Label also separates pages that carry different performance budgets. A checkout page warrants a strict LCP threshold because it carries direct revenue. A blog post can tolerate more. A landing page running paid traffic has the least room for slow LCP, because every visitor who leaves before the page renders is ad spend wasted.

Once you label pages by business function, you can set different alert thresholds in CoreDash per label and route the right alerts to the right teams.

A/B Test (ab)

The A/B Test dimension holds a label you assign to the current variant a user is experiencing. Define it like this:

window.__CWAB = 'my page version';

The value is arbitrary. variant-a and variant-b are obvious choices, but you can use any string that maps to your experimentation platform's variant identifiers.
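How you obtain the variant depends on your experimentation platform. As a minimal sketch, assume the platform stores the assigned variant in a cookie. The cookie name exp_variant and the helpers readCookie and variantFromCookie are hypothetical; substitute whatever your platform actually exposes.

```javascript
// Reads one cookie value from a cookie string such as document.cookie.
// Returns null when the cookie is not present.
function readCookie(cookieString, name) {
  for (const part of cookieString.split(';')) {
    const [key, ...rest] = part.trim().split('=');
    if (key === name) return decodeURIComponent(rest.join('='));
  }
  return null;
}

// 'exp_variant' is a hypothetical cookie name used for illustration.
function variantFromCookie(cookieString, cookieName = 'exp_variant') {
  return readCookie(cookieString, cookieName);
}

// In the browser, run this before the CoreDash snippet executes.
// Users without an assigned variant leave __CWAB unset.
if (typeof document !== 'undefined') {
  const variant = variantFromCookie(document.cookie);
  if (variant) window.__CWAB = variant;
}
```

Leaving the variable unset for users outside the experiment keeps them from being counted as control traffic.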

Why this matters

A/B tests are one of the most common sources of unintended performance regressions. Variant B ships a new hero image carousel. Variant B loads a third-party recommendation widget. Variant B includes an extra round of React hydration. All of these carry a performance cost that your experiment tooling almost certainly does not measure.

Most experimentation platforms track conversion rates and revenue. They do not track p75 LCP or INP. If variant B converts 2% better but loads 400ms slower on mobile, you need to know that before rolling it out to 100% of traffic. The performance cost may erase the conversion gain over the next quarter as users lose patience.

With __CWAB set, open CoreDash, filter by ab = variant-b, and compare Core Web Vitals side by side with the control. I have seen A/B tests where the winning variant had a 600ms worse p75 LCP than the control because it loaded a heavier font. The business team saw the conversion lift; they did not see the performance regression. That is what this dimension prevents.

Logged In Status (li)

The Logged In Status dimension records whether the current user is authenticated. Define it like this:

window.__CWVLI = 1; // logged in
window.__CWVLI = 0; // logged out

Why this matters

Logged-in users receive a fundamentally different page than anonymous visitors. Their requests bypass many CDN cache layers. The server runs database queries for personalized content: the user's cart, their order history, their saved items. That server-side work adds directly to TTFB.

On the frontend, authenticated pages often load more JavaScript: account widgets, notification systems, shopping cart reactivity. They may also skip the prerendering or edge caching that makes anonymous pages fast. The result is that logged-in users often see slower performance than anonymous users, yet logged-in users are typically your highest-value customers. They have already converted. They are the ones you most need to retain.

Without the li dimension, slow authenticated performance hides inside your aggregate numbers. Your anonymous LCP might be 1.8s while your logged-in LCP is 3.4s. Depending on the traffic mix, the aggregate might read as 2.3s and look acceptable. Split by li and the picture changes completely.
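To see how the blended figure arises, here is a simplified worked example. It treats the aggregate as a traffic-weighted average, which is an approximation: percentile metrics such as p75 do not combine linearly. The traffic shares (68.75% anonymous, 31.25% logged in) are assumptions chosen to reproduce the 2.3s figure, and blendedValue is a hypothetical helper.

```javascript
// Simplified illustration: blends segment values by traffic share.
// Real p75 values do not combine linearly; this only shows the masking effect.
function blendedValue(segments) {
  const totalShare = segments.reduce((sum, s) => sum + s.share, 0);
  return segments.reduce((sum, s) => sum + s.value * s.share, 0) / totalShare;
}

const blended = blendedValue([
  { name: 'anonymous', value: 1.8, share: 0.6875 }, // assumed traffic share
  { name: 'logged-in', value: 3.4, share: 0.3125 }, // assumed traffic share
]);

console.log(blended.toFixed(1)); // "2.3"
```

Under these assumed shares, roughly a third of traffic sees 3.4s while the headline number stays at 2.3s.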

Implementation

All three dimensions use the same pattern: set a window variable before the CoreDash snippet executes. Place them in a script tag in your document head or in your application's initialization code:

// Set all three based on your app state
window.__CWVL = 'checkout'; // page label
window.__CWAB = 'variant-b'; // A/B test variant
window.__CWVLI = 1; // logged in

The label values are strings, except __CWVLI, which takes 1 or 0. Keep them consistent across your codebase. If you use product-detail in one template and productDetail in another, CoreDash treats them as two separate segments and your data fragments. Pick a convention and enforce it.
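One way to enforce a convention is to route every assignment through a single helper that normalizes the page label. The sketch below assumes kebab-case as the convention. The normalizeLabel and setCoreDashDimensions helpers are hypothetical and not part of CoreDash; only the three window variables come from the documentation above.

```javascript
// Converts labels such as 'productDetail' or 'Product Detail' to 'product-detail'.
// Kebab-case is an assumed convention, not a CoreDash requirement.
function normalizeLabel(value) {
  return String(value)
    .trim()
    .replace(/([a-z0-9])([A-Z])/g, '$1-$2') // split camelCase boundaries
    .replace(/[\s_]+/g, '-') // spaces and underscores become hyphens
    .toLowerCase();
}

// Hypothetical helper: writes the three CoreDash variables onto a target object.
// In a browser, globalThis is window, so the default target sets window.__CWVL etc.
function setCoreDashDimensions({ pageLabel, variant, loggedIn }, target = globalThis) {
  if (pageLabel) target.__CWVL = normalizeLabel(pageLabel);
  // The variant is passed through unchanged so it still matches the
  // identifier used by the experimentation platform.
  if (variant) target.__CWAB = String(variant);
  target.__CWVLI = loggedIn ? 1 : 0;
  return target;
}
```

Calling setCoreDashDimensions({ pageLabel: 'productDetail', variant: 'variant-b', loggedIn: true }) before the CoreDash snippet runs would set __CWVL to product-detail, __CWAB to variant-b, and __CWVLI to 1.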

Combining all three

The real value appears when you stack these dimensions together. You are running an A/B test on your checkout page for logged-in users. You want to know if variant B makes the authenticated checkout experience faster or slower.

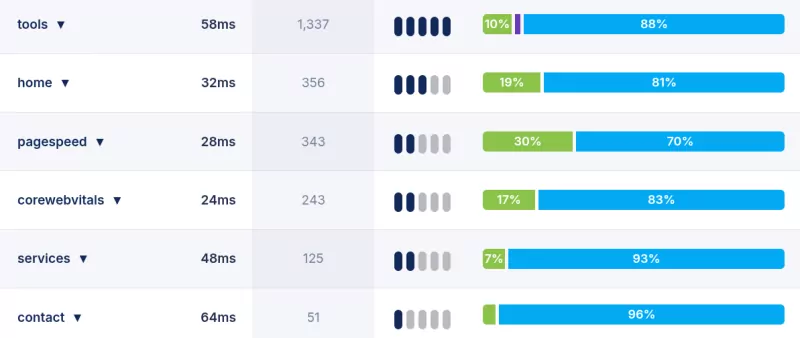

In CoreDash, filter by ab = variant-b plus lb = checkout plus li = 1. That gives you the performance of your checkout variant for authenticated users specifically. Few monitoring tools surface that combination without custom engineering work on your side.

Standard technical dimensions tell you what the browser experienced. Custom dimensions tell you what the business experienced. A 400ms LCP regression means something very different on a landing-page running paid traffic versus a blog post. These distinctions matter for prioritization, and prioritization is where performance work either succeeds or stalls.