CoreDash Dimension: Repeat Visitor

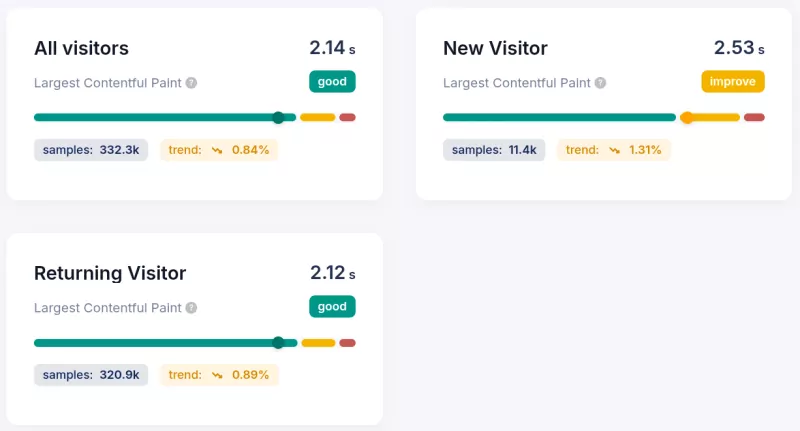

Separate new and returning visitor performance to find where cold-cache load times are dragging down your real user data.

Dimension: User Behavior: Repeat Visitor (fv)

The Repeat Visitor dimension splits your performance data into two populations: users who have visited your site before and users who have not. The engineering difference between these groups is the browser cache. A returning visitor can load cached fonts, scripts, and images from disk. A new visitor fetches every byte from the network.

This matters because your aggregate p75 LCP is computed over both populations combined. If 40% of your sessions are new visitors, their cold-cache load times pull that p75 up. Without this dimension, you cannot tell whether an LCP regression is a real infrastructure problem or a temporary spike in new user acquisition.
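A minimal sketch of this effect, using made-up LCP samples and a simple nearest-rank percentile (the sample values and the `p75` helper are illustrative assumptions, not CoreDash's aggregation method). It shows how the combined p75 moves when only the share of new visitors changes:

```python
import math

def p75(samples_ms):
    """Nearest-rank 75th percentile of LCP samples in milliseconds."""
    ordered = sorted(samples_ms)
    rank = math.ceil(0.75 * len(ordered))  # 1-based nearest rank
    return ordered[rank - 1]

# Hypothetical samples, not real field data.
repeat_lcp = [1200, 1400, 1500, 1600, 1700, 1800]  # warm cache
new_lcp = [2200, 2400, 2600, 2800]                 # cold cache

# 40% new visitors: 4 new sessions out of 10.
baseline = p75(repeat_lcp + new_lcp)

# Acquisition spike: twice as many new sessions, same per-group performance.
spike = p75(repeat_lcp + new_lcp * 2)

print(p75(repeat_lcp), p75(new_lcp))  # 1700 2600
print(baseline, spike)                # 2400 2600
```

Neither group got slower in this example, yet the combined p75 crosses 2.5s purely because the traffic mix shifted.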

Why the Performance Gap Is Larger Than You Expect

The browser cache eliminates entire request chains for returning visitors. On a typical content site, a repeat visitor skips the DNS lookup, TCP handshake, TLS negotiation, and server response for every asset that is still fresh in cache. The LCP resource itself is often served from the local cache in under 5ms instead of taking 200ms to 800ms over the network. That is not a marginal improvement: it is a structural difference in how the page loads.

In CoreDash data across monitored sites, returning visitors typically show LCP scores 35% to 60% lower than new visitors on the same pages. The gap is widest on image-heavy pages where the hero image is large and the origin server is geographically distant from the user. On pages with server-side rendering and a text LCP element, the gap narrows because text load delay is near zero for both groups.

INP differences between the two groups are smaller but still present. New visitors often trigger more JavaScript parsing on first load as module bundles are evaluated for the first time. In Chromium-based browsers, returning visitors benefit from V8's code cache, which stores compiled bytecode so that most of the parse-and-compile work is skipped. On JavaScript-heavy pages, this can shave 50ms to 150ms off processing time.

Reading the Three Values

0: Repeat Visitor

The browser reported that this is not the user's first session on your origin. Cached resources are available. On most marketing and editorial sites tracked in CoreDash, repeat visitors make up 55% to 70% of all sessions. Their performance data is your warm-cache baseline: the best-case scenario for real users who know your site. If your LCP is poor here, the problem is not the cache. Look at render-blocking resources, server response time, or render delay instead.

1: New Visitor

No cache. The browser fetches every resource from the network. This is your cold-cache worst case, and it represents the first impression for every user who finds you through organic search, a paid ad, or a social share. New visitors typically represent 30% to 45% of sessions. Their LCP scores run 300ms to 700ms higher than repeat visitors on image-based pages. If your new visitor LCP fails the 2.5s threshold but your repeat visitor LCP passes, your optimization target is clear: reduce the size and delay of the LCP resource itself, because you cannot rely on cache for this audience.

2: Not Measured

CoreDash could not determine visit type for this session. This typically occurs when the browser blocks the storage access required to distinguish new from returning visitors, or when a privacy-focused browser configuration prevents the check. On most sites, this bucket is under 5% of sessions. Treat it as a noise floor rather than a segment to optimize for.
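The three values can be summarized as a small lookup. The sketch below assumes you have exported sessions where each row carries the fv code as an integer; the export shape and the `visit_type_shares` helper are hypothetical, and only the code-to-label mapping comes from the descriptions above:

```python
from collections import Counter

# Mapping taken from the three values described above.
VISIT_TYPE_LABELS = {0: "Repeat Visitor", 1: "New Visitor", 2: "Not Measured"}

def visit_type_shares(fv_values):
    """Return the share of sessions per visit type as fractions of the total."""
    counts = Counter(fv_values)
    total = sum(counts.values())
    if total == 0:
        return {label: 0.0 for label in VISIT_TYPE_LABELS.values()}
    return {label: counts.get(code, 0) / total
            for code, label in VISIT_TYPE_LABELS.items()}

# Hypothetical export: 12 repeat, 7 new, 1 not measured.
sessions = [0] * 12 + [1] * 7 + [2] * 1
shares = visit_type_shares(sessions)
```

With these made-up counts, the shares fall inside the ranges quoted above: 60% repeat, 35% new, 5% not measured.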

Debugging Workflow

- Establish your baseline split: Open the Repeat Visitor dimension in CoreDash and note the percentage of new versus repeat sessions. If new visitors are above 50% of traffic, cold-cache performance is your dominant user experience and must be the primary optimization target.

- Compare LCP by visit type: Filter to new visitors only and record the p75 LCP. Then filter to repeat visitors and record the same metric. A gap above 500ms points to asset size or network fetch time as the bottleneck. A gap below 200ms suggests render-side issues that affect both groups equally.

- Target the LCP resource directly: For new visitors with slow LCP, the fix is reducing the resource load time. Compress the LCP image, serve it from a CDN edge node close to your users, and apply fetchpriority="high". These gains persist regardless of cache state. Do not rely on caching to compensate for an oversized or slowly served LCP asset.

- Validate with the Navigation Type dimension: Cross-reference against the Navigation Type dimension. Reload and back-forward navigations skew toward repeat visitors. If your repeat visitor LCP looks unexpectedly slow, a high proportion of reload navigations (where cached resources are revalidated rather than served directly) may be the reason.
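The first two steps of this workflow can be sketched as a pair of helpers. The function names and return strings are illustrative assumptions. The 50%, 500ms, and 200ms cut-offs come from the steps above. The workflow does not say how to read a gap between 200ms and 500ms, so the sketch labels that range "mixed" as an assumption:

```python
def cold_cache_is_primary(new_sessions: int, repeat_sessions: int) -> bool:
    """True when new visitors are more than 50% of measured sessions."""
    total = new_sessions + repeat_sessions
    return total > 0 and new_sessions / total > 0.5

def diagnose_lcp_gap(new_p75_ms: float, repeat_p75_ms: float) -> str:
    """Classify the p75 LCP gap between new and repeat visitors."""
    gap_ms = new_p75_ms - repeat_p75_ms
    if gap_ms > 500:
        return "network: asset size or fetch time"
    if gap_ms < 200:
        return "render: affects both groups"
    # Assumption: gaps between the two thresholds are treated as inconclusive.
    return "mixed: check both"

print(diagnose_lcp_gap(3100, 2400))  # network: asset size or fetch time
print(diagnose_lcp_gap(2600, 2500))  # render: affects both groups
```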

Engineering Rule of Thumb

- New visitor LCP target: Under 2.5s at p75. This is harder to hit than repeat visitor LCP and requires actual infrastructure work: CDN, image optimization, and correct fetch priority.

- Acceptable gap between new and repeat visitor LCP: Up to 400ms. A larger gap indicates that your site depends on browser cache to pass Core Web Vitals, which means first impressions are failing.

- Not Measured below 5%: If this bucket grows above 10%, investigate whether a cookie consent implementation or storage permission change is blocking visit-type detection.
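As a rough illustration, the three rules can be turned into a single check. This is a sketch only: the function name, inputs, and message wording are assumptions, and the thresholds are the ones listed above.

```python
def rule_of_thumb_findings(new_p75_ms, repeat_p75_ms, not_measured_share):
    """Return a list of rule-of-thumb violations; an empty list means all pass."""
    findings = []
    if new_p75_ms >= 2500:
        findings.append("new visitor p75 LCP is not under 2.5s")
    if new_p75_ms - repeat_p75_ms > 400:
        findings.append("gap above 400ms: site depends on browser cache")
    if not_measured_share > 0.10:
        findings.append("Not Measured above 10%: check consent or storage permissions")
    return findings

# Hypothetical inputs: passes on LCP, but the gap is 500ms.
print(rule_of_thumb_findings(2400, 1900, 0.04))
# ['gap above 400ms: site depends on browser cache']
```

A site like the one in the usage line passes the new visitor target yet still gets flagged, which is the borderline case the gap rule is meant to expose.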

The Repeat Visitor dimension is one of the first filters I apply when a site shows a borderline pass on LCP. Aggregate field data hides the real story. Splitting by visit type immediately shows whether the optimization work is solid or whether the site is coasting on cache hits from a loyal returning audience while failing every new user who arrives from search.