CoreDash Dimension: Device Type

Debug the mobile performance gap by splitting your Core Web Vitals data across device form factors.

Dimension: Device Type (d)

The Device Type dimension splits your Real User Monitoring data into two categories: mobile and desktop. This is the single most important first filter in any performance investigation because mobile and desktop are fundamentally different computing environments. They differ in CPU power, network conditions, viewport size, and often browser engine.

If you are looking at aggregate Core Web Vitals without filtering by device type, you are averaging two populations that have almost nothing in common. That average is misleading at best.
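To see why the blended number misleads, here is a minimal sketch that computes p75 LCP for all samples and then per device type. The sample shape, field names, and values are made up for illustration and are not CoreDash's schema; the percentile uses the simple nearest-rank method.

```typescript
// Hypothetical RUM sample shape; field names are illustrative only.
type DeviceSample = { device: "mobile" | "desktop"; lcpMs: number };

// Nearest-rank percentile: the smallest value with at least p% of samples at or below it.
function percentileNearestRank(values: number[], p: number): number {
  if (values.length === 0) return NaN;
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1] ?? NaN;
}

// p75 LCP for the blended population and for each device type separately.
function p75ByDevice(samples: DeviceSample[]): { all: number; mobile: number; desktop: number } {
  const pick = (d: DeviceSample["device"]): number[] =>
    samples.filter((s) => s.device === d).map((s) => s.lcpMs);
  return {
    all: percentileNearestRank(samples.map((s) => s.lcpMs), 75),
    mobile: percentileNearestRank(pick("mobile"), 75),
    desktop: percentileNearestRank(pick("desktop"), 75),
  };
}

// Made-up values: six mobile page loads and four desktop page loads.
const demoSamples: DeviceSample[] = [
  ...[2200, 2600, 2900, 3100, 3400, 3800].map((lcpMs) => ({ device: "mobile" as const, lcpMs })),
  ...[1200, 1500, 1700, 1900].map((lcpMs) => ({ device: "desktop" as const, lcpMs })),
];

console.log(p75ByDevice(demoSamples)); // { all: 3100, mobile: 3400, desktop: 1700 }
```

The blended 3100ms describes neither group: desktop is comfortably fast and mobile is worse than the aggregate suggests.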

The Mobile Performance Gap

Mobile devices account for roughly 62% of global web traffic according to Statista (2025). Yet mobile consistently underperforms desktop. According to the 2025 Web Almanac, only 48% of mobile origins pass all three Core Web Vitals compared to 56% on desktop. That is an 8 percentage point gap.

The gap exists because mobile devices face three constraints that desktops do not:

- CPU power: A mid-range Android phone has roughly 3-5x less processing power than a desktop. JavaScript that executes in 50ms on desktop may take 150-250ms on mobile, enough to push INP past the 200ms "good" threshold.

- Network latency: Mobile connections (4G/5G) have higher round-trip times and more variance than wired connections. This inflates TTFB and LCP Load Delay.

- Viewport size: Smaller screens change which element becomes the LCP. Your desktop hero image may shrink below a text block on mobile, completely changing the optimization target.
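The CPU arithmetic is worth doing explicitly. The sketch below scales a desktop-measured handler duration by an assumed slowdown factor and also inverts the calculation to get a desktop budget. It treats the slowdown as a flat multiplier and ignores input delay and presentation delay, so read the results as rough estimates rather than measurements. The function names are illustrative.

```typescript
// The INP "good" threshold published by Google, in milliseconds.
const INP_GOOD_MS = 200;

// Scale a desktop-measured duration by an assumed slowdown factor.
function estimateMobileMs(desktopMs: number, slowdown: number): number {
  return desktopMs * slowdown;
}

// Largest desktop duration that still lands at or under the threshold on the slower device.
function desktopBudgetMs(slowdown: number, thresholdMs: number = INP_GOOD_MS): number {
  return thresholdMs / slowdown;
}

for (const slowdown of [3, 4, 5]) {
  const mobileMs = estimateMobileMs(50, slowdown);
  const budget = desktopBudgetMs(slowdown).toFixed(1);
  console.log(`${slowdown}x slower: 50ms on desktop is about ${mobileMs}ms on mobile; desktop budget is about ${budget}ms`);
}
```

Under these assumptions, a handler needs to finish in roughly 40-67ms on your development machine to stay "good" on a phone that is 3-5x slower.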

CoreDash Device Type Distribution

Across CoreDash projects, the typical traffic split is 65% mobile and 35% desktop. E-commerce sites skew heavier toward mobile (70-75%), while B2B SaaS products often see a 50/50 split or even desktop dominance.

The performance gap in CoreDash data mirrors the global trend. Mobile p75 LCP averages 2.8s compared to 1.9s on desktop. For INP, the gap is even larger: mobile p75 sits around 220ms while desktop hovers near 120ms.
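To put those p75 values in context, the sketch below rates them against Google's published Core Web Vitals thresholds. The type and function names are illustrative; the thresholds are the public ones (LCP 2.5s/4.0s, INP 200ms/500ms, CLS 0.1/0.25).

```typescript
type VitalName = "LCP" | "INP" | "CLS";
type VitalRating = "good" | "needs-improvement" | "poor";

// [upper bound of "good", upper bound of "needs improvement"].
// LCP and INP are in milliseconds; CLS is unitless.
const VITAL_THRESHOLDS: Record<VitalName, [number, number]> = {
  LCP: [2500, 4000],
  INP: [200, 500],
  CLS: [0.1, 0.25],
};

function rateVital(metric: VitalName, p75: number): VitalRating {
  const [good, needsImprovement] = VITAL_THRESHOLDS[metric];
  if (p75 <= good) return "good";
  if (p75 <= needsImprovement) return "needs-improvement";
  return "poor";
}

// p75 values quoted in the paragraph above.
console.log("LCP mobile:", rateVital("LCP", 2800), "| desktop:", rateVital("LCP", 1900));
console.log("INP mobile:", rateVital("INP", 220), "| desktop:", rateVital("INP", 120));
```

With these numbers, desktop rates "good" on both metrics while mobile lands in "needs-improvement" on both.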

Metric-Specific Analysis

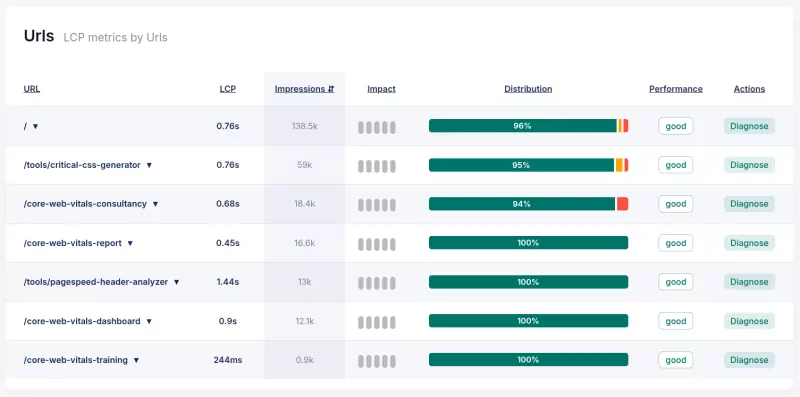

Largest Contentful Paint (LCP)

Mobile LCP is almost always worse than desktop. The primary cause is Load Delay: mobile browsers discover the LCP image later because the HTML takes longer to arrive (higher TTFB) and the preload scanner has to compete for time on a slower, busier CPU. If your desktop LCP is under 2.0s but mobile exceeds 3.0s, the issue is rarely the image file itself. It is the delivery pipeline.
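A quick way to confirm this is to break LCP into its four subparts: TTFB, Load Delay, Load Duration, and Render Delay. The sketch below does that from four timestamps. In a browser these would come from the navigation, resource timing, and LCP performance entries; here they are plain numbers, and both example timelines are invented for illustration.

```typescript
// Timestamps in milliseconds, relative to navigation start.
type LcpTimings = {
  ttfb: number;                 // first byte of the HTML document
  resourceRequestStart: number; // request for the LCP resource begins
  resourceResponseEnd: number;  // LCP resource has finished downloading
  lcp: number;                  // LCP render time
};

type LcpSubparts = { ttfb: number; loadDelay: number; loadDuration: number; renderDelay: number };

function lcpSubparts(t: LcpTimings): LcpSubparts {
  // Clamp so the subparts never go negative when timestamps are missing or out of order.
  const requestStart = Math.max(t.ttfb, t.resourceRequestStart);
  const responseEnd = Math.max(requestStart, t.resourceResponseEnd);
  return {
    ttfb: t.ttfb,
    loadDelay: requestStart - t.ttfb,
    loadDuration: responseEnd - requestStart,
    renderDelay: Math.max(0, t.lcp - responseEnd),
  };
}

// Invented timelines for the same page on two device types.
const desktopLcpParts = lcpSubparts({ ttfb: 200, resourceRequestStart: 350, resourceResponseEnd: 900, lcp: 1000 });
const mobileLcpParts = lcpSubparts({ ttfb: 800, resourceRequestStart: 1900, resourceResponseEnd: 2700, lcp: 3100 });

console.log("desktop", desktopLcpParts); // loadDelay: 150, loadDuration: 550
console.log("mobile", mobileLcpParts);   // loadDelay: 1100, loadDuration: 800
```

In this made-up case the image download itself grows by 250ms on mobile, but Load Delay grows by 950ms. That is the pattern to look for before touching the image file.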

Interaction to Next Paint (INP)

This is where the device gap hits hardest. JavaScript event handlers that feel instant on a desktop i7 can block the main thread for 300ms+ on a Snapdragon 665. Filter by mobile, sort by INP impact, and you will find the exact interactions that break on real phones. I see this constantly: developers test on MacBook Pros and ship interactions that are unusable on the devices 65% of their users actually carry.
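If you export raw interaction data, the "filter by mobile, then rank" step can be sketched as follows. The record shape and selector names are hypothetical, and ranking by p75 duration is a stand-in assumption, not CoreDash's actual impact calculation.

```typescript
// Hypothetical interaction record; field names are illustrative only.
type InteractionSample = { device: "mobile" | "desktop"; target: string; inpMs: number };
type InteractionRank = { target: string; count: number; p75Ms: number };

// Keep mobile samples only, group by interaction target, rank by p75 duration (slowest first).
function rankMobileInteractions(samples: InteractionSample[]): InteractionRank[] {
  const byTarget: Record<string, number[]> = {};
  for (const s of samples) {
    if (s.device !== "mobile") continue;
    const list = byTarget[s.target] ?? [];
    list.push(s.inpMs);
    byTarget[s.target] = list;
  }
  const ranked: InteractionRank[] = [];
  for (const target of Object.keys(byTarget)) {
    const sorted = [...(byTarget[target] ?? [])].sort((a, b) => a - b);
    const rank = Math.max(1, Math.ceil(0.75 * sorted.length)); // nearest-rank p75
    ranked.push({ target, count: sorted.length, p75Ms: sorted[rank - 1] ?? NaN });
  }
  return ranked.sort((a, b) => b.p75Ms - a.p75Ms);
}

// Invented data: the same button is fine on desktop and slow on mobile.
const demoInteractions: InteractionSample[] = [
  { device: "mobile", target: "#add-to-cart", inpMs: 310 },
  { device: "mobile", target: "#add-to-cart", inpMs: 280 },
  { device: "mobile", target: "#add-to-cart", inpMs: 350 },
  { device: "mobile", target: "#add-to-cart", inpMs: 120 },
  { device: "mobile", target: "#menu-toggle", inpMs: 90 },
  { device: "mobile", target: "#menu-toggle", inpMs: 140 },
  { device: "mobile", target: "#menu-toggle", inpMs: 110 },
  { device: "desktop", target: "#add-to-cart", inpMs: 60 },
  { device: "desktop", target: "#add-to-cart", inpMs: 80 },
];

console.log(rankMobileInteractions(demoInteractions));
```

In practice you would probably weight the ranking by how often each interaction occurs, so that a slow but rare interaction does not outrank a moderately slow one that every visitor hits.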

Cumulative Layout Shift (CLS)

CLS differences between device types usually trace back to responsive design. Ad slots that reserve space on desktop may collapse or resize on mobile. Font fallback metrics that align on desktop cause visible shifts on smaller viewports. Web fonts render differently across mobile and desktop browsers, and the physical pixel density affects sub-pixel rounding.
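Viewport size also changes the score itself. A layout shift is scored as impact fraction times distance fraction, both measured relative to the viewport, so the same pixel shift costs more on a small screen. The sketch below uses a deliberately simplified geometry (one full-width block that starts inside the viewport and is pushed down); the viewport sizes and block dimensions are assumptions chosen for illustration.

```typescript
type ViewportSize = { width: number; height: number };

// Layout shift score for one full-width block pushed down by `shiftPx`.
function fullWidthShiftScore(
  viewport: ViewportSize,
  blockTop: number,
  blockHeight: number,
  shiftPx: number
): number {
  // Impact region: union of the block's old and new positions, clipped to the viewport.
  const regionBottom = Math.min(viewport.height, blockTop + blockHeight + shiftPx);
  const impactFraction = Math.max(0, regionBottom - blockTop) / viewport.height;
  // Distance fraction: move distance relative to the larger viewport dimension.
  const distanceFraction = shiftPx / Math.max(viewport.width, viewport.height);
  return impactFraction * distanceFraction;
}

// Same 300px block, same 90px push (for example, a late-loading ad above it).
const desktopShift = fullWidthShiftScore({ width: 1440, height: 900 }, 100, 300, 90);
const mobileShift = fullWidthShiftScore({ width: 390, height: 844 }, 100, 300, 90);

console.log(desktopShift.toFixed(3), mobileShift.toFixed(3)); // 0.027 0.049
```

With these numbers the identical shift scores almost twice as high on the phone, mainly because 90px is a much larger share of the phone's largest viewport dimension.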

Debugging Workflow

- Start every investigation with the device filter: Before looking at any other dimension, split by Device Type. If your aggregate LCP is 2.5s, you might find desktop at 1.8s and mobile at 3.1s. In that case the "problem" is exclusively mobile.

- Compare distributions, not just p75: Check the good/needs-improvement/poor distribution for each device type. Desktop at 85% good and mobile at 45% good tell a very different story than the p75 alone.

- Combine with other dimensions: Once you have isolated the device type, add a second filter. Device Type + Country reveals if the mobile gap is global or concentrated in regions with slower networks. Device Type + Navigation Type shows if mobile back-forward navigations are cached properly.
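The second and third steps can be sketched on exported data like this. The function computes the good/needs-improvement/poor shares of LCP for any grouping key, so the same code covers Device Type alone and Device Type + Country. The record shape, country codes, and values are hypothetical; only the 2.5s and 4.0s LCP thresholds are Google's published ones.

```typescript
// Hypothetical exported RUM record; field names are illustrative only.
type RumRecord = { device: "mobile" | "desktop"; country: string; lcpMs: number };
type RatingShare = { good: number; needsImprovement: number; poor: number };

// Percentage of page loads in each LCP rating bucket, grouped by an arbitrary key.
function lcpRatingShares(
  records: RumRecord[],
  keyOf: (r: RumRecord) => string
): Record<string, RatingShare> {
  const counts: Record<string, RatingShare> = {};
  for (const r of records) {
    const key = keyOf(r);
    const c = counts[key] ?? { good: 0, needsImprovement: 0, poor: 0 };
    if (r.lcpMs <= 2500) c.good += 1;
    else if (r.lcpMs <= 4000) c.needsImprovement += 1;
    else c.poor += 1;
    counts[key] = c;
  }
  const shares: Record<string, RatingShare> = {};
  for (const key of Object.keys(counts)) {
    const c = counts[key];
    if (!c) continue;
    const total = c.good + c.needsImprovement + c.poor;
    shares[key] = {
      good: (100 * c.good) / total,
      needsImprovement: (100 * c.needsImprovement) / total,
      poor: (100 * c.poor) / total,
    };
  }
  return shares;
}

// Invented records for two countries.
const demoRecords: RumRecord[] = [
  { device: "mobile", country: "US", lcpMs: 1800 },
  { device: "mobile", country: "US", lcpMs: 2400 },
  { device: "mobile", country: "IN", lcpMs: 3000 },
  { device: "mobile", country: "IN", lcpMs: 3600 },
  { device: "mobile", country: "IN", lcpMs: 4500 },
  { device: "desktop", country: "US", lcpMs: 1200 },
  { device: "desktop", country: "US", lcpMs: 1600 },
  { device: "desktop", country: "IN", lcpMs: 2000 },
  { device: "desktop", country: "IN", lcpMs: 2700 },
];

// Step 1: device type only. Step 2: add country as a second dimension.
console.log(lcpRatingShares(demoRecords, (r) => r.device));
console.log(lcpRatingShares(demoRecords, (r) => `${r.device}/${r.country}`));
```

In this made-up data set mobile is 40% good overall, but the second grouping shows the US mobile loads are all good and the failures sit entirely in one country. That is the kind of answer a second dimension gives you.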

Engineering Rule of Thumb

- Mobile LCP under 2.5s: This is the threshold Google uses for "good." If your desktop passes but mobile fails, focus on reducing Load Delay (fetchpriority, preload) and TTFB (edge caching, CDN).

- Mobile INP under 200ms: Test every interactive feature on a real mid-range Android device. Chrome DevTools CPU throttling (4x) approximates this, but real device testing is better.

- Never optimize only for desktop: If your mobile traffic exceeds 50% (and it almost certainly does), mobile performance is the ranking signal that matters most. Google evaluates Core Web Vitals per device type, so your mobile CrUX data is what counts for mobile search results.

Device Type is not a nice-to-have filter. It is the first question you ask: "Is this a mobile problem or a desktop problem?" Every optimization decision flows from that answer.